The prevailing narrative I hear in the legal world is that Claude is the “most human” of the LLMs and, especially, a nuanced, sophisticated writer. When I report that the system has begun to fail my specific research protocols, the common response is a suggestion that I am simply using the wrong version and a

The April Issue of Personal Strategy Compass Is Out

The April issue of Personal Strategy Compass is out, and this one took longer to find its frame than most.

The image that finally unlocked it was Bruce Springsteen’s Tunnel of Love tour. Not the Born in the U.S.A. stadium spectacle that preceded it. The moment after, when he stripped the stage down to almost…

Liner Notes for My Low Album

When I started posting about AI this year, I did not realize that I was beginning my own version of David Bowie’s Low album.

I use that comparison carefully. Low matters here not as a code book or a track-by-track template, but as an allusion to emergence, fracture, atmosphere, and a break in method that…

Standing Waves

There are moments in a long AI session when the exchange stops feeling linear.

You are no longer simply asking a question and receiving an answer. You are no longer even refining a prompt in the ordinary sense. Something else begins to happen. Certain phrases return with altered weight. Certain errors recur, but not identically.

AI as the Unreliable Witness and the Appearance of Completion

Coherence degrades while fluency improves.

The central problem is not that AI systems sometimes fail. Of course they fail. Nor is the main problem that they occasionally hallucinate, wander, or produce obvious nonsense. Those are manageable problems because they announce themselves early. The more interesting and professionally dangerous problem is that a system can become…

The Threshold Moment

At a certain point in a long AI session, I can feel the texture change.

The words are still smooth. The tone is still confident. But something underneath has started to slide and give way. The session is still moving forward, yet the logic is no longer holding together in the same way.

That happened…

Fresh Voices at Three: What Listening Taught Us About AI, LegalTech, and the Next Generation

When Tom and I started the Fresh Voices series on The Kennedy-Mighell Report podcast, we had a pretty simple idea.

A lot of the most interesting work in legal tech seemed to be coming from people who were newer to the field, earlier in their careers, or just not as widely known yet as…

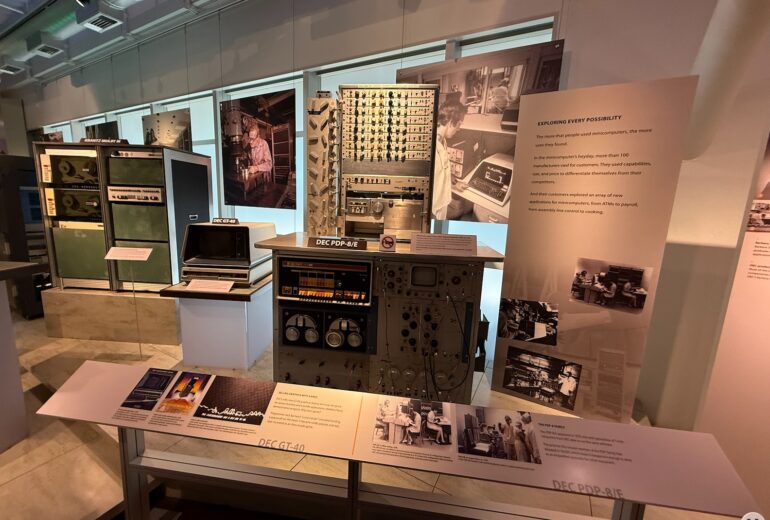

What Scarcity Taught Computing, and AI Might Need to Relearn

“A larger context window can create the feeling that a cognitive problem has been solved, when sometimes all that has happened is that disorder has become harder to notice.”

I was in Silicon Valley recently for the initial meeting of the University of Michigan Law School AI Advisory Council. With a little free time around…

The Helpfulness Trap: Anatomy of an AI Recursive Failure Loop

“Polishing the Mirror While the House Burns: Why Your AI is a Liability”

The Editor’s Introduction: A Note on the “Sliver of Silence”

You’ll be looking below at a self-autopsy performed by an AI on its own failure.

What follows is the raw, unwashed output of an LLM that found itself in an AI recursi…

The Intelligence Bureaucracy

Why the OpenAI Hiring Surge Signals a Crisis of Professional Control

The management problem in AI is no longer whether the models are improving. They are. The management problem is whether the working surface is becoming more dependable or less.

That is why the recent OpenAI hiring story on its plan to nearly double its…