“A larger context window can create the feeling that a cognitive problem has been solved, when sometimes all that has happened is that disorder has become harder to notice.”

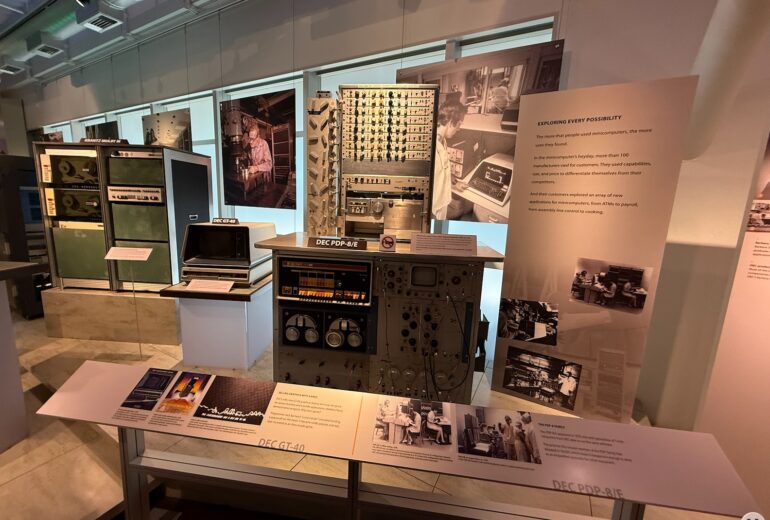

I was in Silicon Valley recently for the initial meeting of the University of Michigan Law School AI Advisory Council. With a little free time around that meeting, I did what many of us would do if given the chance and went to the Computer History Museum. I expected to enjoy it. I did not expect one section of it to linger in my mind long after the visit.

It was the section with the early hard drives and memory chips, more than anything else, that stopped me and got me thinking. There they were behind the glass: big hard drives, large memory chips, substantial boards crowded with components that once represented real capacity, real cost, and very real limits. They did not strike me as quaint. They felt instead like physical reminders of a discipline that may still have something to teach us.

Computing began under constraint. In that small part of the museum, you could see the constraints in a way that is harder to see now. Storage was expensive. Memory was tight. Access was slow enough that disorder had a price. You could not casually keep everything close at hand and hope the system would sort itself out for you.

That is what I found myself turning over as I moved through that section. Constraint was not just a technical condition. It may have been one of the great teachers of computing. The machines were smaller, slower, and more limited than what we have now, of course, but the more interesting point is that those limits forced people to develop habits of selection, structure, and retrieval. They had to think architecturally because they did not have much room for laziness.

They had to decide what mattered, what belonged where, what needed to be loaded now, what could wait, and what had earned the right to stay close. And somewhere in that discipline, I suspect, lies one of the deeper lessons of my visit.

That lesson, or at least the one I keep circling back to, has to do with indexing. So much of the current AI conversation still seems to assume abundance. Bigger context windows. More documents. More tools. More sources. More memory. More retrieval. The quiet assumption often seems to be that if the machine is not yet producing the answer we want, perhaps it simply has not been given enough. Add more material. Widen the window. Increase the supply. Sometimes that may be true. But I keep wondering whether, in many cases, it is exactly backward.

Early computing did not become dependable because it escaped constraint. It became dependable because it learned how to work intelligently inside constraint.

Limited memory forced a distinction between what was stored and what needed to be present now. Slow access forced attention to naming, order, and structure. Limited capacity forced a more serious question than “How much can we keep?”

The more serious question was, and may still be, “What can we find when it matters?” That is where indexing begins to look less like a technical detail and more like a governing idea. The problem is not whether information exists somewhere in the system. The problem is whether the right thing can be surfaced at the right time, in the right form, with enough traceability that someone can rely on it.

This may be one of the management errors in the current AI moment. We may be confusing accumulation with readiness, access with retrieval, and retrieval with judgment. A larger context window can create the feeling that a cognitive problem has been solved, when sometimes all that has happened is that disorder has become harder to notice. The machine has not necessarily become wiser. The clutter has simply become easier to hide.

That, at least, is one reason the museum hit me the way it did. Those older drives and memory boards were so physical, so bounded, and so obviously finite that they made visible something easy to miss in the present rhetoric around AI. More capacity is not the same thing as more coherence. A larger pile is still a pile.

If you give the pantry, the garage, and the attic to the machine all at once, you should probably not be surprised if the answer comes back with a certain leftovers quality. The sterner lesson from those early systems may be that useful systems learn to exclude well. They decide what belongs in working memory, what remains in storage, what gets indexed, what gets ignored, what is staged for retrieval, and what earns persistence. Those are not just housekeeping details. They may be the real design decisions. And design, in the end, tends to become a management question.
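For readers who think better in code, here is one rough way to picture that split between working memory and storage. It is only a sketch under my own assumptions: the `Item` class, the `priority` scale, the word-count budget, and the sample items are all hypothetical, and a real system would count tokens and rank far more carefully.

```python
from dataclasses import dataclass


@dataclass
class Item:
    name: str
    text: str
    priority: int  # assumed scale: higher means it has earned a place close at hand


def stage(items, budget_words):
    """Split items into (working, stored) names.

    Items are considered in priority order. Each one goes into working
    memory only if it still fits the word budget; everything else stays
    in storage, available but out of the room.
    """
    working, stored = [], []
    remaining = budget_words
    for item in sorted(items, key=lambda i: -i.priority):
        cost = len(item.text.split())  # crude stand-in for a token count
        if cost <= remaining:
            working.append(item.name)
            remaining -= cost
        else:
            stored.append(item.name)
    return working, stored


# Hypothetical material: two things that matter and one attic box.
items = [
    Item("governing-statute", "The statute text that controls the question.", 3),
    Item("attic-box", "Twenty years of unrelated correspondence " * 5, 1),
    Item("client-facts", "Key dates and parties.", 2),
]
working, stored = stage(items, budget_words=15)
print("working:", working)
print("stored:", stored)
```

The point of the sketch is the boundary, not the ranking rule. Nothing is deleted; the attic box simply does not get loaded.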

This is one reason I think AI may still be immature in a very specific sense: not because the models are weak, but because we still surround them with habits of informational gluttony. We ask them to ingest too much material that is too loosely arranged, too weakly ranked, and too poorly governed, and then we act surprised when the result is muddy.

The problem is not always lack of information. Sometimes the problem is too much badly organized information and too little discipline about what belongs in the room.

That is why I keep coming back to a thought that feels simple, maybe even old-fashioned. Persist broadly if you want, but show the model less. Build better indexes and more coherent packets. Most of all, build better paths into the material so we can actually find our way back. Name things so they can be found again. Separate canon from scrap. Separate what must be remembered now from what can remain available at a distance.
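To make "persist broadly, show less" a little more concrete, here is a minimal sketch of the idea: keep everything in a store, build a simple inverted index over it, and hand the model only a small named packet. The function names (`build_index`, `select_packet`), the sample documents, and the term-overlap scoring are my own illustrative assumptions, not a recommendation of any particular retrieval method.

```python
import re
from collections import defaultdict


def tokenize(text):
    """Lowercase the text and split it into simple alphanumeric terms."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(store):
    """Map each term to the set of document names whose text contains it."""
    index = defaultdict(set)
    for name, text in store.items():
        for term in tokenize(text):
            index[term].add(name)
    return index


def select_packet(query, store, index, limit=2):
    """Return at most `limit` (name, text) pairs, ranked by query-term overlap.

    Names travel with the text so whatever is shown to the model can be
    traced back to where it came from.
    """
    scores = defaultdict(int)
    for term in set(tokenize(query)):
        for name in index.get(term, ()):
            scores[name] += 1
    ranked = sorted(scores, key=lambda name: (-scores[name], name))
    return [(name, store[name]) for name in ranked[:limit]]


# Hypothetical store: everything is persisted, canon and scrap alike.
store = {
    "lease-2021": "Commercial lease with a renewal option and notice period.",
    "memo-renewal": "Memo on the renewal notice deadline for the lease.",
    "lunch-menu": "Soup, salad, and sandwiches for the firm retreat.",
    "old-draft": "Scrap draft about parking at the retreat.",
}
index = build_index(store)
packet = select_packet("lease renewal notice deadline", store, index)
for name, text in packet:
    print(name, "->", text)
```

In this toy example the lunch menu and the scrap draft stay in the store but never reach the packet, which is roughly what I mean by separating what must be present now from what can remain available at a distance.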

I left the museum thinking that scarcity may have taught computing a form of discipline that AI still needs to learn. Not the discipline of doing less with less, exactly, but the discipline of deciding better. Better staging. Better retrieval. Better respect for the difference between what is stored and what is needed now. Those old drives and memory boards looked large behind the glass, but the margins they enforced were small, and from those small margins came some of computing’s most durable habits.

That may be the part of the story worth carrying forward into AI. We talk constantly about bigger windows, larger context, more memory, and more power. Fair enough. But the question that stayed with me after that museum visit was a little different, and maybe a little more useful. Not how much more can the system hold, but whether we are getting any better at deciding what belongs in the room when the real work begins. Because if scarcity taught computing the value of a good index, a clean handoff, and a disciplined boundary around working memory, then perhaps the next step for AI is not simply to remember more. Perhaps it is to learn, with our help, how to forget better.

And that leaves me with one more question. If the real future of AI depends less on infinite memory than on better selection, retrieval, and exclusion, are we actually building intelligence into the work, or are we just finding more elaborate ways to hide the clutter?

[Originally posted on DennisKennedy.Blog (https://www.denniskennedy.com/blog/)]


